In our blog “An Easy Data Migration Is There Such A Thing?” we looked at some of the complexities of undertaking a data migration. We concluded that whilst it is true that data can be migrated between Netezza systems using the nz_migrate command, getting your data from A to B is really only a small part of the overall data warehouse migration project. The remainder is the complex work of a QA team, and it requires skill, expertise, tools, and resources.

When planning to upgrade your legacy Netezza system to IBM® Netezza® Performance Server for Cloud Pak for Data, there are two important questions, amongst others, that you should ask yourself:

- How much downtime is acceptable to the business?

- Will you need additional resources to complete the project?

What is an acceptable period of downtime?

When it comes to business continuity planning, most enterprises will have established how much downtime is acceptable in the event of a system failure, and will have agreed Recovery Time and Recovery Point Objectives with the IT department. If that hasn’t been done, it’s quite important to do so before you migrate any key system onto a new platform, as the need for business continuity doesn’t get put on hold just because you are upgrading your hardware.

When migrating your Netezza system to a cloud platform, you must plan for the time it will take to transfer your data to it. It’s a simple mathematical problem: the more data you have, and the slower your transfer speed, the longer it will take. To give you an idea, transferring 10 terabytes (TB) of data over a network at 100 megabits per second (Mbit/s) takes more than nine days. Most data warehouses hold a lot more than 10 TB of data, and 100 Mbit/s is not an uncommon transfer speed. A large percentage of your data will be static, but some tables will be changing constantly, and at some point you must stop making database changes on the old system and cut over to the new one. This is where an outage window will be required to apply any remaining changes to the new system. If this is planned badly, you risk a much longer outage than you expected.
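The arithmetic behind that estimate can be sketched in a few lines of Python. This is a rough illustration only: the function name and the `efficiency` parameter are our own, decimal units are assumed (1 TB = 10^12 bytes, 1 Mbit/s = 10^6 bits per second), and a real transfer will usually be slower once protocol overhead and competing traffic are taken into account.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(data_terabytes: float,
                  link_megabits_per_second: float,
                  efficiency: float = 1.0) -> float:
    """Estimate the days needed to move a volume of data over a network link.

    efficiency is a hypothetical fudge factor between 0 and 1 for the share
    of the nominal link speed you actually achieve.
    """
    total_bits = data_terabytes * 1e12 * 8  # terabytes -> bytes -> bits
    bits_per_second = link_megabits_per_second * 1e6 * efficiency
    return total_bits / bits_per_second / SECONDS_PER_DAY


# The example from the text: 10 TB over a 100 Mbit/s link.
print(f"{transfer_days(10, 100):.1f} days")  # prints "9.3 days"
```

Running the same function with your own data volume and a realistic `efficiency` value gives a first estimate of how long the bulk transfer will take, and therefore how much change data will have accumulated by the time you cut over.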

So, this is your first challenge: planning how to get all your data into your new platform whilst minimising the negative impact on the business and the inconvenience to users.

Based on our experience, however, your biggest challenge comes after the data has been successfully migrated to the new system, in particular:

- Verifying your data integrity

- Recreating cross-database objects

- Replicating user permissions

Data Integrity Testing

It’s hard to imagine that any company would perform a system upgrade involving a full data migration without performing data validation testing, regardless of claims by the vendor that the new environment is fully compatible. As a minimum, you should:

- Verify that all the required data was transferred according to the requirements.

- Verify that the destination tables were populated with accurate values.

- Verify the performance of custom scripts and stored procedures, including checks that cross-database objects have been correctly restored.

Proper testing takes time and resources, so this needs to be factored into the migration project.
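As a minimal sketch of the first check in the list above, the following Python compares per-table row counts taken from the old and new systems. It assumes you have already collected the counts yourself (for example, with a `SELECT COUNT(*)` per table on each side). The function name and the table names are hypothetical, and a matching row count does not prove the values are accurate, so checksums or sampled comparisons would still be needed for the second check.

```python
def compare_row_counts(source: dict[str, int],
                       target: dict[str, int]) -> dict[str, list[str]]:
    """Report tables that are missing from, or differ in size on, the target.

    source and target map table names to row counts gathered separately
    from the old and new systems.
    """
    missing = sorted(t for t in source if t not in target)
    mismatched = sorted(
        t for t in source if t in target and source[t] != target[t]
    )
    return {"missing": missing, "mismatched": mismatched}


# Hypothetical counts, for illustration only.
old_system = {"SALES": 1_000_000, "CUSTOMERS": 52_000, "ORDERS": 310_000}
new_system = {"SALES": 1_000_000, "CUSTOMERS": 51_990}

report = compare_row_counts(old_system, new_system)
print(report)  # {'missing': ['ORDERS'], 'mismatched': ['CUSTOMERS']}
```

An empty report is the minimum bar for sign-off, not the whole of it. Anything listed should be investigated before users are moved across.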

Recreating cross-database objects

Cross-database objects are views and stored procedures that reference multiple databases. They are problematic because they must be rebuilt in the new environment, and this can’t happen until the referenced databases have been fully migrated. This means that any queries that contain these cross-database objects won’t be available to users until the rebuild has occurred, and this takes time.

Replicating user permissions

Replicating your user permissions to the new system can also be problematic. There is no simple way to allocate permissions: they must be granted manually or with a third-party utility such as the Smart Access Control module of Smart Management Frameworks. If you have hundreds of users and elect to do this manually, you have a big job on your hands, and your valuable Netezza resources can become bogged down with this chore (it’s explained by IBM here). So, your system may be fully migrated, but your users will not be able to use it until they have been granted the right access permissions. It’s also worth remembering that maintaining user permissions manually is inherently risky and can result in accidental data leakage; read our blog about synchronising Netezza with Entra ID.

What dedicated Netezza resources are on hand?

If you have a small dedicated Netezza team, which is becoming the norm nowadays, you will need external help if you want to avoid the risk of a prolonged outage, especially given the number of post-migration tasks we have just described. Even if you have a larger Netezza team available, some system downtime is unavoidable for users who require real-time data. If you are planning to supplement your Netezza team with external resources, the costs involved are not insignificant, especially over the prolonged period that larger Netezza implementations may need.

What’s an alternative to using nz_migrate?

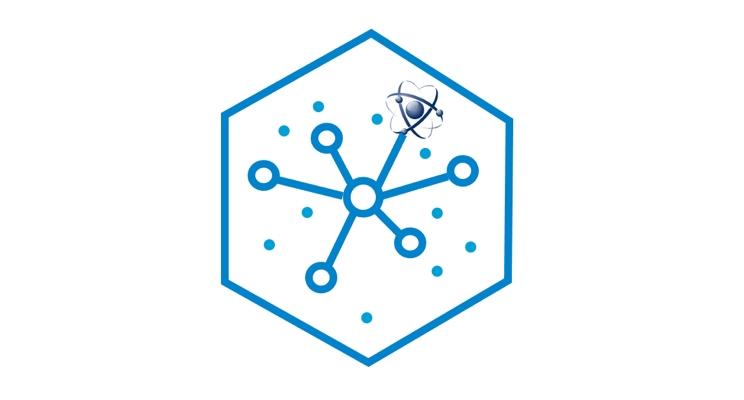

A migration to CP4D can, in fact, be done with virtually no downtime at all if you set up Netezza replication in advance of your system upgrade and run your two systems in parallel with your data fully replicated before cutting over to your new CP4D system. This can be done with the Smart Database Replication module of Smart Management Frameworks. It allows you to use your existing Netezza resources and obviates the need to do post-migration activities that are the usual cause of user downtime.

Here is a diagram that illustrates the point:

If you’d like to see what your migration to CP4D will entail, please feel free to contact us with any questions.